#TWO SAMPLE TWO TAILED HYPOTHESIS TEST CALCULATOR CODE#

The power.t.test() function requires exactly one of the parameters n, delta, sd, sig.level or power to be passed as NULL so that this parameter can be calculated. Here, we calculate the sample size required to detect a between-group difference of 50% when the standard deviation is also 50%, tolerating false positives 5% of the time (sig.level=0.05), with an 80% probability of not committing a Type II error (power=0.8). The sample size calculation is constructed to find a difference between two independent groups (type="two.sample") for a two-sided test (alternative="two.sided"). That is, the test considers the hypothesis that group 1 values could be either greater or smaller than group 2 values, and not only greater or only smaller.

In experimental research, scientists often don't know how big an effect might be or how variable it is, so sample size calculations are often based on the ratio of the effect size to its variability. It works out that when the ratio delta:sd = 1, the minimum number of samples needed for each of two independent groups is 17 (after rounding up). Scientists usually test a few more samples, up to 20, in case some produce poor-quality data. So if you have been in research long enough to wonder where the magic group size of 20 comes from, it comes from the delta:sd ratio.

If the sample size were known, the same power.t.test() call could calculate power instead, simply by setting n to the sample size and passing power as NULL. Try calling power.t.test() with different specifications: set different parameters to NULL and see what values are calculated for different settings.

For the p value of a t-test, the relevant function in R is pt().
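As a rough sketch of the calculation described in this section, the call below asks power.t.test() for the per-group sample size and then, separately, for the power at a fixed sample size. The values delta = 0.5 and sd = 0.5 are an assumed encoding of "50%" (only their ratio of 1 matters here), and n = 20 is the group size mentioned in the text; the variable names are illustrative.

```r
# stats ships with base R and is attached by default, so nothing needs installing.
library(stats)

# Solve for sample size: n is NULL, everything else is specified.
# delta = 0.5 and sd = 0.5 are assumed values giving a delta:sd ratio of 1.
res_n <- power.t.test(n = NULL, delta = 0.5, sd = 0.5,
                      sig.level = 0.05, power = 0.8,
                      type = "two.sample", alternative = "two.sided")
n_per_group <- ceiling(res_n$n)  # round up to whole samples per group
print(n_per_group)

# Solve for power: n is fixed at 20 per group, power is NULL.
res_power <- power.t.test(n = 20, delta = 0.5, sd = 0.5,
                          sig.level = 0.05, power = NULL,
                          type = "two.sample", alternative = "two.sided")
print(res_power$power)
```

Because sig.level has a non-NULL default, it has to be passed explicitly as NULL if it is the quantity to be solved for.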

#TWO SAMPLE TWO TAILED HYPOTHESIS TEST CALCULATOR PDF#

That function returns the area under the curve of the t-distribution PDF at or above your observed t-statistic. (With pt(), set lower.tail = FALSE to get this upper tail; by default it returns the area at or below the value.)
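A minimal sketch of that upper-tail lookup with pt() follows. The t-statistic 0.6595 is the one used in the worked example in this document; the degrees of freedom are not given there, so df = 18 is a hypothetical value chosen only for illustration.

```r
t_obs <- 0.6595  # t-statistic from the example in the text
df <- 18         # hypothetical degrees of freedom, assumed for illustration

# Area under the t PDF at or above t_obs (one-tailed p value, positive tail).
upper_tail <- pt(t_obs, df = df, lower.tail = FALSE)

# Default behaviour: area at or below t_obs.
lower_tail <- pt(t_obs, df = df)

print(upper_tail)
print(upper_tail + lower_tail)  # the two areas cover the whole distribution
```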

#TWO SAMPLE TWO TAILED HYPOTHESIS TEST CALCULATOR SOFTWARE#

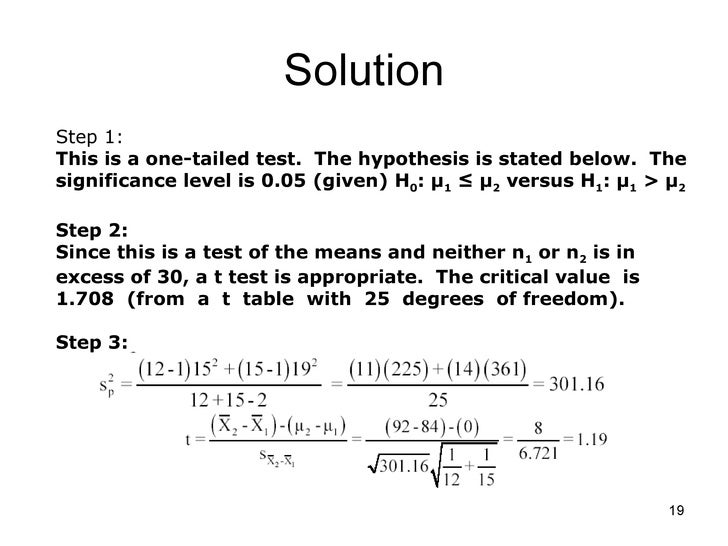

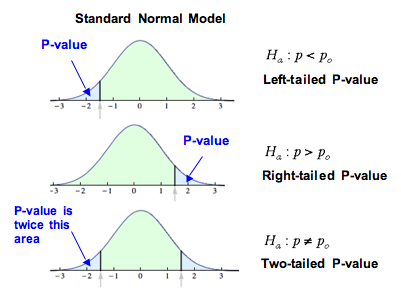

First, a little background on the meaning of a p value. The p value in a t-test (any t-test, not just two independent samples) refers to what proportion of t-statistics (for those degrees of freedom) are that extreme or more, assuming you want a two-tailed p value. If you want a one-tailed p value, then it's what proportion of t-statistics (for those degrees of freedom) are that high or higher (for the positive tail) or that low or lower (for the negative tail).

There is a defined theoretical distribution of t-statistics (the t distribution). So as long as you know how many degrees of freedom you have, you know theoretically what distribution your t-statistic came from under the null hypothesis. That means you can compare that t-statistic to the rest of its null distribution: if it's a very unusual value for that distribution (i.e. way out in one of the tails), then you conclude that it is unlikely to have come from the null distribution.

The p value is simply the proportion of the distribution, the area under the curve, that is at least as far from 0 as your t-statistic. So in order to calculate the p value that corresponds to a particular t-statistic at some degrees of freedom, you need to measure the area under the curve from that point on out. Since the distribution is symmetrical, you can simply double that value to get the two-tailed p value.

If you've taken calculus, you may already know what needs to be done: the area under a curve is the integral of the function that defines the curve. Here's the function that defines the t-distribution (its probability density function, or PDF):

$$f(t) = \frac{\Gamma\left(\frac{\nu+1}{2}\right)}{\sqrt{\nu\pi}\,\Gamma\left(\frac{\nu}{2}\right)}\left(1+\frac{t^2}{\nu}\right)^{-\frac{\nu+1}{2}}$$

where nu ($\nu$, looks sort of like a "v") is the number of degrees of freedom and Gamma ($\Gamma$, looks sort of like an upside down "L") is the gamma function.

In your example, your t statistic is 0.6595. You want to get the area under the curve from 0.6595 up, i.e. from 0.6595 to positive infinity. You would enter these as the limits over which to integrate, and then crunch through the calculus to evaluate the integral of the t PDF. This gives you the area under the curve from 0.6595 up, but for a two-tailed test you also want it from -0.6595 down. Since the distribution is symmetrical, you don't have to calculate that separately: it will be exactly the same, so you can just double the value you got from 0.6595 to positive infinity.

That's a lot of intense calculation for what is otherwise a pretty simple test, and most of us casual t-test users are not up for that kind of time investment. Instead, we have historically printed out tables of already-calculated p values for a range of degrees of freedom and t-statistics (you are likely to find such a table in the back of your intro to stats textbook), or included algorithms in our software that do the heavy lifting of the p value calculation for us, as is suggested by the p() function in your question.
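The integration described in this answer can be sketched numerically in R. The example below writes out the t PDF from its standard definition, integrates it from the observed t-statistic to infinity with integrate(), doubles the result, and compares it with what pt() returns. The helper name t_pdf and the value df = 18 are assumptions made for illustration; the source gives t = 0.6595 but not the degrees of freedom.

```r
t_obs <- 0.6595  # t-statistic from the example in the text
df <- 18         # hypothetical degrees of freedom, assumed for illustration

# t-distribution PDF written out from its definition (illustrative helper;
# R's built-in equivalent is dt()).
t_pdf <- function(t, nu) {
  gamma((nu + 1) / 2) / (sqrt(nu * pi) * gamma(nu / 2)) *
    (1 + t^2 / nu)^(-(nu + 1) / 2)
}

# Area under the curve from t_obs to positive infinity, by numerical integration.
upper_area <- integrate(t_pdf, lower = t_obs, upper = Inf, nu = df)$value

# The distribution is symmetric, so the two-tailed p value is twice that area.
p_two_tailed <- 2 * upper_area

# The same quantity from the built-in distribution function.
p_builtin <- 2 * pt(abs(t_obs), df = df, lower.tail = FALSE)

print(c(integrated = p_two_tailed, builtin = p_builtin))
```

The two numbers should agree to within the tolerance of the numerical integration, which is why software can skip the calculus and call the distribution function directly.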